Apache Flink is an open-source stream processing framework for accurate, high-performance, always-available data streaming applications.

Execution Models:

There are two types of execution models:

- Batch: the job runs for a finite amount of time and releases its computing resources once execution completes.

- Stream: data is processed continuously, as soon as it is produced.

Flink is built on the streaming model, which is a natural fit for unbounded datasets: streaming execution means data is processed as a continuous flow and results are continuously updated. Matching the execution model to the type of dataset brings benefits in both accuracy and performance.
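The difference between the two models can be sketched in a few lines of plain Python. This is only an illustration of the idea, not Flink code; the function names `batch_total` and `streaming_totals` are made up for this example.

```python
from itertools import count, islice

def batch_total(records):
    """Batch: the input is finite, so one result is returned at the end."""
    return sum(records)

def streaming_totals(records):
    """Stream: emit an updated result for every record as it arrives."""
    total = 0
    for value in records:
        total += value
        yield total

bounded = [3, 1, 4]
print(batch_total(bounded))  # 8

unbounded = count(1)  # 1, 2, 3, ... never ends
# Take only the first four updated results from the endless stream.
print(list(islice(streaming_totals(unbounded), 4)))  # [1, 3, 6, 10]
```

The batch function can only answer once all input has been read, while the streaming function keeps producing up-to-date answers and never needs the input to end.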


Datasets:

Flink works with two types of datasets:

1) Unbounded: infinite datasets that are appended to continuously.

2) Bounded: finite datasets that do not change.

Many real-world datasets that are traditionally treated as bounded, or "batch", data are in fact unbounded, whether the data is stored in a sequence of directories in HDFS or in a log-based system such as Apache Kafka. Important examples of unbounded datasets include:

1) Machine log data

2) Financial markets

3) Measurements provided by physical sensors

4) Client interactions with mobile and web applications


Why use Flink? It is an open-source framework for distributed stream processing that:

- Performs at large scale, running on thousands of nodes with good throughput and latency characteristics.

- Produces accurate results even when data arrives late or out of order.

- Is fault-tolerant: it recovers from failures while preserving the state of the application.

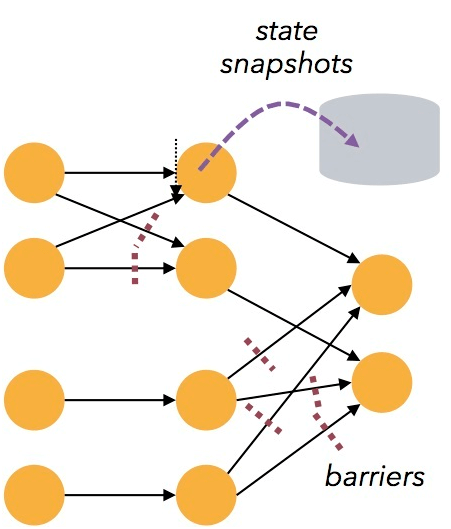

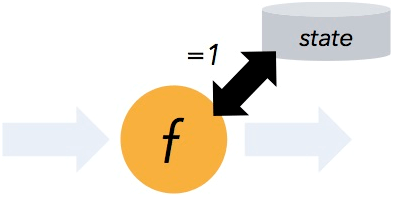

Flink guarantees exactly-once semantics for stateful computations. "Stateful" means that an application can maintain an aggregation or summary of the data that has been processed over time. Flink's built-in checkpointing mechanism ensures exactly-once semantics for the application's state in the event of a failure. The image below shows how it works.
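The idea behind checkpointing can be sketched in plain Python: the state is saved together with the position in the input, so that after a failure the job resumes from the last snapshot and replays only what came after it. This is a simplified, single-process illustration, not Flink's actual mechanism; `run_job`, `SimulatedCrash`, and the `store` dictionary are hypothetical names.

```python
import copy

class SimulatedCrash(Exception):
    """Stands in for a machine or process failure."""

def run_job(events, store, checkpoint_every=2, fail_at=None):
    """Count events per key, resuming from the checkpoint held in `store`."""
    offset = store.get("offset", 0)
    counts = copy.deepcopy(store.get("counts", {}))
    while offset < len(events):
        if offset == fail_at:
            raise SimulatedCrash(f"crashed before event {offset}")
        key = events[offset]
        counts[key] = counts.get(key, 0) + 1
        offset += 1
        if offset % checkpoint_every == 0:
            # Snapshot the state together with the source position.
            store["offset"] = offset
            store["counts"] = copy.deepcopy(counts)
    return counts

events = ["a", "b", "a", "c", "a"]
store = {}
try:
    run_job(events, store, fail_at=3)
except SimulatedCrash:
    pass  # work done since the last checkpoint is lost

# The last checkpoint covers two events; the rest are replayed from there.
recovered = run_job(events, store)
print(recovered)  # {'a': 3, 'b': 1, 'c': 1}
```

The event at index 2 is read twice, once before the crash and once after recovery, but it is reflected in the final state exactly once, which is the guarantee the text describes.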

Savepoints in Flink provide a state versioning mechanism, which is very useful for updating applications without losing state and with minimal downtime.


Flink is designed to run on large-scale clusters with thousands of nodes. The image below shows the standalone cluster mode.

Flink's lightweight fault tolerance enables the system to sustain high throughput rates while never losing data on failure.

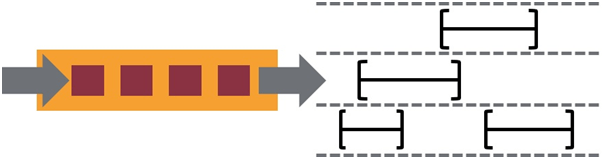

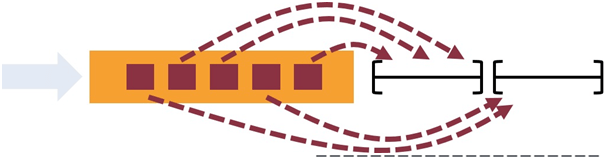

Flink offers convenient windowing based on time, and windows can be customized with flexible triggering conditions to handle sophisticated streaming patterns.

Flink supports event-time semantics for stream processing and windowing. Event time makes it simple to compute accurate results when events arrive late or out of order.
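Windowing by the timestamp carried in each event can be sketched as follows. This is a plain-Python illustration under simplifying assumptions: all events are available before the counts are read, whereas a real stream processor such as Flink uses watermarks to decide when a window is complete. The function name and the sample events are made up.

```python
from collections import defaultdict

def tumbling_window_counts(events, window_size):
    """Count events per tumbling window, keyed by window start time.

    Each event is a (timestamp, payload) pair; the timestamp is the time the
    event happened, not the time it arrived.
    """
    counts = defaultdict(int)
    for timestamp, _payload in events:
        window_start = timestamp - (timestamp % window_size)
        counts[window_start] += 1
    return dict(sorted(counts.items()))

# Arrival order differs from event-time order: the event at t=4 arrives late.
arrivals = [(1, "a"), (7, "b"), (12, "c"), (4, "d"), (9, "e")]
print(tumbling_window_counts(arrivals, window_size=5))
# {0: 2, 5: 2, 10: 1}
```

Because the window is chosen from the event's own timestamp, the late event at t=4 is still counted in the window starting at 0.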

Flink's Architecture:

Frameworks and Flink:

A Flink application is built from the following steps:

Data source: the incoming data that Flink processes.

Transformation: the processing step, in which Flink modifies the incoming data.

Data sink: where Flink sends the data after processing.
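The three building blocks can be sketched with Python generators. This is an illustration of the pattern only, not the Flink API; the sample log lines and the filtering rule are invented for the example.

```python
def source():
    """Source: yields the incoming records (hypothetical sample log lines)."""
    yield from ["error disk full", "info started", "error timeout"]

def transformation(records):
    """Transformation: keep error lines and upper-case them."""
    for record in records:
        if record.startswith("error"):
            yield record.upper()

def sink(records, output):
    """Sink: deliver processed records; here, append them to a list."""
    for record in records:
        output.append(record)

results = []
sink(transformation(source()), results)
print(results)  # ['ERROR DISK FULL', 'ERROR TIMEOUT']
```

Each record moves through the chain one at a time, so the same structure would work if the source never ended.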

Dataflow Programming Model of Flink:

Levels of Abstraction:

The lowest level of abstraction simply offers stateful streaming. It is embedded into the DataStream API via the Process Function. It allows users to freely process events from one or more streams and to use consistent, fault-tolerant state. In addition, users can register event-time and processing-time callbacks, allowing programs to implement sophisticated computations.

The low-level Process Function integrates with the DataStream API, making it possible to drop to the lower level of abstraction for certain operations only. The DataSet API offers additional primitives on bounded datasets, such as loops and iterations.

The Table API is a declarative DSL centered around tables, which may be dynamically changing tables (when representing streams). The Table API follows the (extended) relational model: tables have a schema attached (like tables in relational databases), and the API offers comparable operations, for example select, project, join, group-by, and aggregate. One can seamlessly convert between tables and DataStream/DataSet, allowing programs to mix the Table API with the DataStream and DataSet APIs.
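The relational operations mentioned above (select, group-by, aggregate) can be illustrated with ordinary SQL over a static table. The sketch below uses Python's built-in `sqlite3` module, not Flink; the `clicks` table and its columns are hypothetical, and unlike a dynamic table that represents a stream, this table does not change while it is queried.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# A table has a schema attached, as in any relational database.
conn.execute("CREATE TABLE clicks (user_name TEXT, url TEXT)")
conn.executemany(
    "INSERT INTO clicks VALUES (?, ?)",
    [("alice", "/home"), ("bob", "/cart"), ("alice", "/cart")],
)

# Declarative query: select, group by, and aggregate.
rows = conn.execute(
    "SELECT user_name, COUNT(*) FROM clicks "
    "GROUP BY user_name ORDER BY user_name"
).fetchall()
print(rows)  # [('alice', 2), ('bob', 1)]
conn.close()
```

The query states what result is wanted rather than how to compute it, which is the sense in which such an API is declarative.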

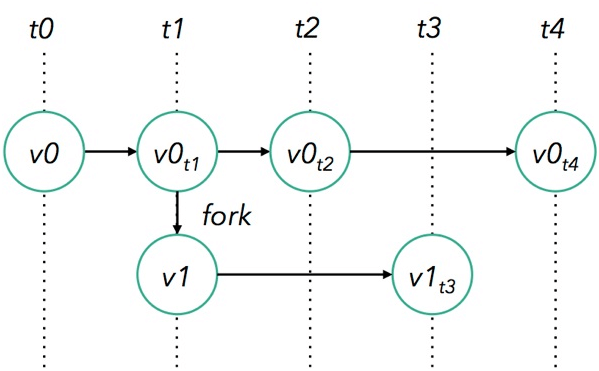

Data Flow and Programs:

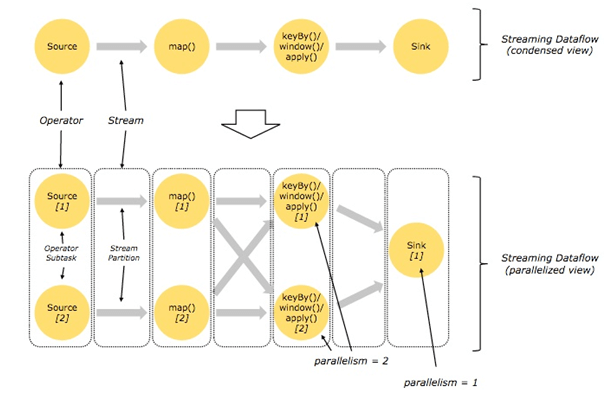

Flink programs are built from streams and transformations. A stream is a flow of data records, and a transformation is an operation that takes one or more streams as input and produces one or more output streams.

When executed, Flink programs are mapped to streaming dataflows. Every dataflow starts with one or more sources and ends in one or more sinks. The dataflows resemble directed acyclic graphs (DAGs).
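A dataflow of this shape can be modelled as a small graph and checked for cycles. The sketch below uses Python's standard `graphlib` module (Python 3.9 or later) and is not Flink code; the operator names and the `execution_order` helper are assumptions made for the example.

```python
from graphlib import CycleError, TopologicalSorter

# Each operator maps to the set of operators it reads from (hypothetical names).
dataflow = {
    "source": set(),
    "map": {"source"},
    "keyBy/window": {"map"},
    "sink": {"keyBy/window"},
}

def execution_order(graph):
    """Return operators in dependency order; fail if the graph has a cycle."""
    try:
        return list(TopologicalSorter(graph).static_order())
    except CycleError as exc:
        raise ValueError(f"dataflow is not acyclic: {exc.args[1]}") from exc

print(execution_order(dataflow))
# ['source', 'map', 'keyBy/window', 'sink']
```

Data enters at the source, passes through each transformation after its inputs, and leaves at the sink; a graph with a cycle would be rejected.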

Data in Parallel Mode:

Programs in Flink are inherently parallel and distributed. During execution, a stream has one or more stream partitions, and each operator has one or more operator subtasks. The operator subtasks are independent of one another and execute in different threads, possibly on different machines or containers.

Streams can transport data between two operators in a one-to-one (forwarding) pattern or in a redistributing pattern:

One-to-one streams preserve the partitioning and ordering of the elements. This means that a subtask of the map operator sees the same elements, in the same order, as they were produced by the corresponding subtask of the source operator. Redistributing streams change the partitioning: each operator subtask sends data to different target subtasks, depending on the selected transformation.
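The two patterns can be sketched in plain Python, with each partition represented as a list. This is an illustration only, not how Flink routes data internally; the function names are made up, and a CRC32 checksum stands in for whatever key hashing a real system uses.

```python
import zlib

def forward(partitions):
    """One-to-one: subtask i passes its elements, in order, to subtask i."""
    return [list(partition) for partition in partitions]

def redistribute_by_key(partitions, parallelism):
    """Redistributing: route each element to a subtask chosen by key hash."""
    targets = [[] for _ in range(parallelism)]
    for partition in partitions:
        for key, value in partition:
            index = zlib.crc32(key.encode()) % parallelism
            targets[index].append((key, value))
    return targets

source_partitions = [[("a", 1), ("b", 2)], [("a", 3), ("c", 4)]]

# Forwarding keeps both the partitioning and the order of the elements.
print(forward(source_partitions) == source_partitions)  # True

# After redistribution, all records with the same key share one partition.
shuffled = redistribute_by_key(source_partitions, parallelism=2)
for partition in shuffled:
    print(partition)
```

Forwarding keeps records where they are, while redistribution moves them between partitions so that records with the same key end up together.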

Advantages of Flink:

1) Low latency and high performance.

2) Support for event time and out-of-order events.

3) Highly flexible streaming windows.

4) Continuous streaming model with backpressure.

5) Fault tolerance through lightweight snapshots.

6) A single runtime for streaming and batch processing.

7) Managed memory.

8) Program optimizer.

Prerequisites:

For learning Big Data Hadoop, it is good to have some knowledge of object-oriented programming (OOP) concepts, but it is not mandatory. The OnlineITGuru trainers will teach these concepts if you are not familiar with them.