In today’s world, as technology advances, the threats against it advance too. Developers and attackers grow in step: as developers build new systems, hackers find ways to steal or destroy both old and new data. Security therefore plays a major role in protecting data from attackers. In this article, I would like to share the role of Apache Ranger in the Hadoop platform.


Why is security important?

The data we work with does not come from a single source, and it does not arrive in a single format: it may come as flat files, CSV files, and so on. The content of these files is not predictable either. Often it is harmless, such as an employee list or an attendance record, but that is not always the case: it may also contain sensitive information such as passwords. If a third party such as an intruder sees an employee list, little harm is done. The problem arises when that person sees sensitive information such as company credentials. This is where security plays a major role.


How to solve the security issue?

As discussed above, security plays a major role when data is transferred across the network. The Apache Software Foundation community addressed this threat from third parties such as intruders by introducing Apache Ranger. Let us look briefly at how Apache Ranger provides security.

Role of Apache Ranger in Hadoop platform:

Apache Ranger is a framework to enable, monitor, and manage comprehensive data security across the Hadoop platform. It provides a comprehensive approach to securing a Hadoop cluster, and its aim is to provide data security across the whole Hadoop ecosystem. It extends baseline security features to batch, interactive SQL, and real-time workloads in Hadoop. With the advent of Apache YARN, the Hadoop platform can support a true data lake architecture, so data security within Hadoop needs to support multiple use cases for data access. Ranger also provides a framework for central administration of security policies and monitoring of user access.

Architecture:

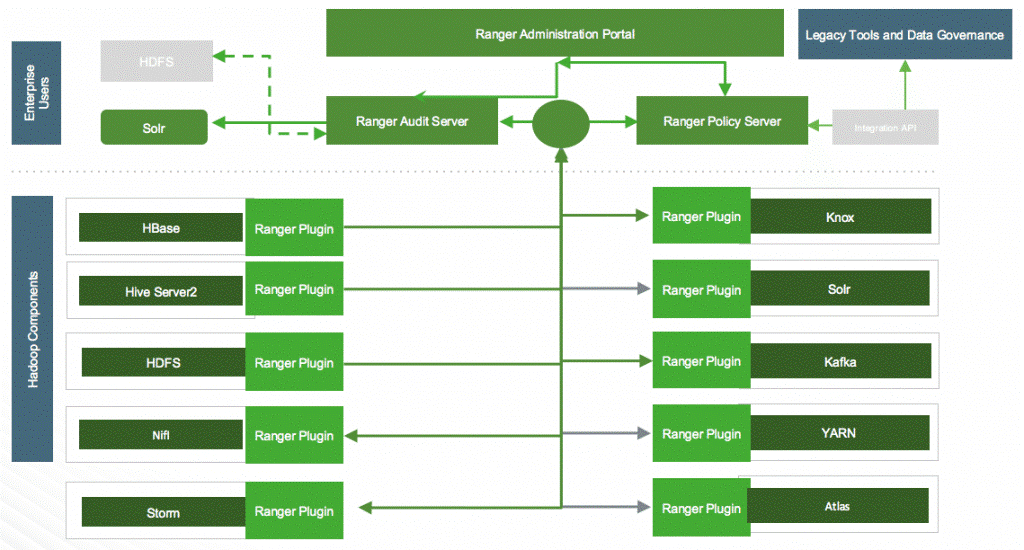

The architecture diagram of Apache Ranger shows how it works across the Hadoop platform. Let us see how Ranger provides security through this architecture.

The architecture has three components. Let us discuss each of them in detail.

Ranger Admin Portal:

The Ranger Admin portal is the central interface for security administration. Here, users can create and update policies, which are stored in a policy database. The portal also includes an audit server, which sends the audit data collected from the plugins for storage in HDFS or in a relational database.
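Policies can also be created programmatically rather than through the portal UI. The sketch below builds an HDFS policy and prepares a POST request to Ranger Admin's public REST API (the `/service/public/v2/api/policy` endpoint). It is a minimal sketch under stated assumptions, not a definitive client: the host `ranger-admin.example.com`, port 6080, service name `hadoopdev`, user `alice`, and credentials are all hypothetical, and the request is only built, never sent.

```python
import base64
import json
import urllib.request

# Hypothetical Ranger Admin address; replace with your own host and port.
RANGER_URL = "http://ranger-admin.example.com:6080"
POLICY_ENDPOINT = "/service/public/v2/api/policy"


def build_hdfs_policy(service, name, path, users, accesses):
    """Build a policy body granting `accesses` on an HDFS `path` to `users`."""
    return {
        "service": service,  # name of the HDFS service registered in Ranger (assumed)
        "name": name,
        "isEnabled": True,
        "resources": {
            # Apply the policy to the path and everything below it.
            "path": {"values": [path], "isRecursive": True},
        },
        "policyItems": [
            {
                "users": users,
                "accesses": [{"type": a, "isAllowed": True} for a in accesses],
            }
        ],
    }


def build_create_request(policy, username, password):
    """Prepare (but do not send) an authenticated POST to Ranger Admin."""
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    return urllib.request.Request(
        RANGER_URL + POLICY_ENDPOINT,
        data=json.dumps(policy).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
        method="POST",
    )


policy = build_hdfs_policy("hadoopdev", "hr-readonly", "/data/hr", ["alice"], ["read", "execute"])
request = build_create_request(policy, "admin", "changeme")
# urllib.request.urlopen(request) would submit it to a running Ranger Admin.
```

Once saved, the policy lands in the policy database, and the plugins pick it up on their next policy pull.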

Ranger Plugins:

A plugin is a simple, lightweight program that extends an existing component. Ranger plugins are Java programs that run inside the processes of the Hadoop components they protect, such as HDFS or Hive. These plugins pull policies from the central server and cache them locally. When a user requests access to a resource, the plugin evaluates the request against those policies and grants or denies access based on the result. The plugin also collects audit data about each request and sends it to the audit server on a separate thread.
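To make the plugin workflow concrete, here is a toy Python model of it: a local policy cache refreshed from a central source, an access check per request, and audit events handed to a background thread so auditing does not slow the request down. Real Ranger plugins are Java code embedded in each component, with much richer matching rules; the names `ToyRangerPlugin` and `Policy` are hypothetical.

```python
import queue
import threading
from dataclasses import dataclass, field


@dataclass
class Policy:
    resource: str  # resource prefix, e.g. an HDFS directory
    users: set = field(default_factory=set)
    groups: set = field(default_factory=set)
    accesses: set = field(default_factory=set)


class ToyRangerPlugin:
    """Simplified model of a plugin: local policy cache plus asynchronous audit."""

    def __init__(self, fetch_policies):
        self._fetch_policies = fetch_policies  # stands in for the call to Ranger Admin
        self.cache = []
        self.audit_queue = queue.Queue()
        self.audit_log = []  # stands in for the audit server
        self._auditor = threading.Thread(target=self._ship_audits, daemon=True)
        self._auditor.start()

    def refresh(self):
        # Pull policies from the central server and keep a local copy.
        self.cache = list(self._fetch_policies())

    def is_allowed(self, user, groups, resource, access):
        # Prefix matching is a simplification of Ranger's resource matching.
        allowed = any(
            resource.startswith(p.resource)
            and access in p.accesses
            and (user in p.users or bool(set(groups) & p.groups))
            for p in self.cache
        )
        # Queue the audit event; the request does not wait for it to be shipped.
        self.audit_queue.put((user, resource, access, allowed))
        return allowed

    def _ship_audits(self):
        # Background thread: forward audit events one at a time.
        while True:
            event = self.audit_queue.get()
            self.audit_log.append(event)
            self.audit_queue.task_done()
```

Because decisions are made against the local cache, requests keep being evaluated even if the central server is briefly unreachable; the trade-off is that policy changes take effect only after the next refresh.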

User Group Sync:

This component synchronizes users and groups from UNIX, LDAP, or Active Directory. This information is stored in the Ranger portal and is used when defining policies.
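For the UNIX source, group membership comes from `/etc/group`-style data. The sketch below parses that format into a user-to-groups mapping, roughly the kind of information a sync process pushes into Ranger so that policies can be granted to groups. It is an illustration under assumptions, not Ranger's usersync code: it reads a hypothetical sample string instead of the real file, and it ignores primary groups, which are recorded in `/etc/passwd` rather than in the member list.

```python
from collections import defaultdict

# Hypothetical sample in /etc/group format: name:password:gid:comma-separated members
SAMPLE_GROUP_FILE = """\
hadoop:x:1001:hdfs,yarn
analysts:x:1002:alice,bob
finance:x:1003:carol
"""


def parse_group_file(text):
    """Map each user to the set of groups that list them as members."""
    user_groups = defaultdict(set)
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        name, _password, _gid, members = line.split(":", 3)
        # filter(None, ...) drops empty entries, e.g. a group with no members.
        for user in filter(None, members.split(",")):
            user_groups[user].add(name)
    return dict(user_groups)


memberships = parse_group_file(SAMPLE_GROUP_FILE)
```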

Goals:

This framework has certain goals. Briefly, they are:

Centralized administration of all security-related tasks, in a central interface or through REST APIs.

Standardized authorization across all Hadoop components.

Enhanced support for different authorization methods, such as role-based access control and attribute-based access control.
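The difference between the two authorization styles named in the last goal can be shown in a few lines. Under role-based access control (RBAC), permissions attach to roles that users hold; under attribute-based access control (ABAC), a rule inspects attributes of the user and the resource, such as a `PII` tag on the data. The roles, the `department` attribute, and the PII rule below are hypothetical, and this is not how Ranger represents policies internally.

```python
from dataclasses import dataclass


@dataclass
class Request:
    user: str
    roles: frozenset
    attributes: dict  # user attributes, e.g. {"department": "HR"} (hypothetical)
    resource_tags: frozenset  # tags on the data being accessed (hypothetical)
    access: str


# RBAC: permissions are attached to roles, and users inherit them.
ROLE_PERMISSIONS = {
    "data_engineer": {"read", "write"},
    "analyst": {"read"},
}


def rbac_allows(req):
    return any(req.access in ROLE_PERMISSIONS.get(role, set()) for role in req.roles)


# ABAC: the decision is a rule over attributes of the user and the resource.
def abac_allows(req):
    # Hypothetical rule: PII-tagged data may only be read by HR staff.
    if "PII" in req.resource_tags:
        return req.access == "read" and req.attributes.get("department") == "HR"
    # Untagged data falls back to the role-based check.
    return rbac_allows(req)
```

An analyst in Sales passes the role check for a PII-tagged file, but the attribute rule denies the read; the same analyst role in HR is allowed.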

I hope this makes clear the importance of Ranger in securing data across the Hadoop platform.


Recommended Audience:

Software developers

Team Leaders

Project Managers

Database Administrators

Prerequisites:

No prior experience is required to start learning Hadoop administration. It is helpful to know some technology related to Big Data and Hadoop, along with basic Java concepts. Familiarity with OOP concepts and Linux commands is also useful.