Apache Cassandra is a strong choice when you need scalability and high availability without compromising performance. Linear scalability and proven fault tolerance on commodity hardware or cloud infrastructure make it a suitable platform for mission-critical data. Cassandra supports replication across multiple data centers, providing lower latency for your clients and the peace of mind of knowing that you can survive regional outages. In this post I will give a short update on Apache Cassandra 3.0.

What are the new features in Apache Cassandra 3.0?

Cassandra moved to a tick-tock release schedule.

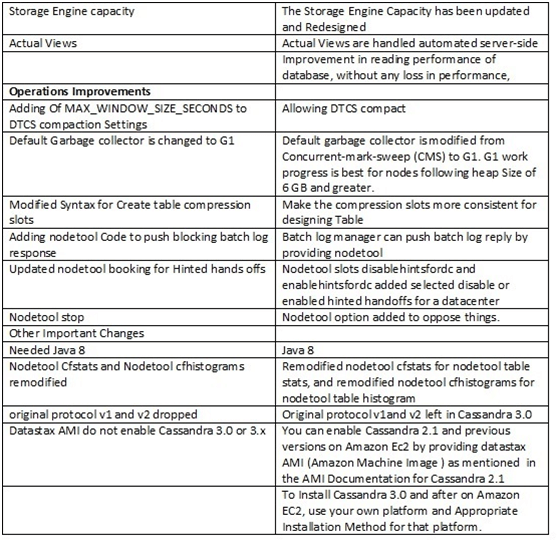

New features in Cassandra 3.0

Analyzing the architecture of Cassandra:

Cassandra is designed to handle big data workloads across many nodes, with no single point of failure. Its architecture is based on the understanding that system and hardware failures can and do occur. Cassandra addresses the problem of failures with a peer-to-peer distributed system across homogeneous nodes, in which data is distributed among all the nodes in the cluster. Nodes exchange state information with each other using a peer-to-peer gossip communication protocol. A sequentially written commit log on every node captures write activity to ensure data durability. Data is then indexed and written to an in-memory structure called a memtable, which resembles a write-back cache. Every time the memory structure is full, the data is written to disk in an SSTable data file. All writes are automatically partitioned and replicated throughout the cluster.
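The write path described above (commit log first, then the in-memory table, then a flush to an on-disk file) can be sketched as a toy model. This is only an illustration under simplifying assumptions, not Cassandra's actual implementation: the class `ToyNode`, the `memtable_limit` threshold and the in-memory lists standing in for files are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ToyNode:
    """Toy model of one node's write path (not Cassandra's real code)."""
    memtable_limit: int = 3                         # hypothetical flush threshold
    commit_log: list = field(default_factory=list)  # append-only, for durability
    memtable: dict = field(default_factory=dict)    # in-memory, latest value per key
    sstables: list = field(default_factory=list)    # immutable, sorted "files"

    def write(self, key, value):
        # 1. Append to the commit log first so the write survives a crash.
        self.commit_log.append((key, value))
        # 2. Apply the write to the in-memory memtable.
        self.memtable[key] = value
        # 3. When the memtable is full, flush it to a new SSTable.
        if len(self.memtable) >= self.memtable_limit:
            self.flush()

    def flush(self):
        # An SSTable is written once, sorted by key, and never modified.
        self.sstables.append(tuple(sorted(self.memtable.items())))
        self.memtable.clear()
        # The flushed data is now "on disk", so its log entries can go.
        self.commit_log.clear()

    def read(self, key):
        # Check the memtable first, then SSTables from newest to oldest.
        if key in self.memtable:
            return self.memtable[key]
        for table in reversed(self.sstables):
            for k, v in table:
                if k == key:
                    return v
        return None
```

Because flushed files are never modified, an update to an existing key simply lands in a newer memtable or SSTable, and the read path prefers the newest copy.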

Cassandra periodically consolidates SSTables using a process known as compaction, discarding obsolete data that has been marked for deletion with a tombstone. To keep all data across the cluster consistent, various repair mechanisms are employed.
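A minimal sketch of the compaction idea follows, assuming each SSTable is modelled as a dictionary of key to `(timestamp, value)` and that a deleted key carries a `TOMBSTONE` marker. Both the data layout and the names `compact` and `TOMBSTONE` are illustrative assumptions. The sketch also drops tombstones immediately, whereas a real cluster keeps them for a configurable grace period before discarding them.

```python
TOMBSTONE = object()  # illustrative sentinel that marks a deleted key


def compact(sstables):
    """Merge several toy SSTables into one.

    Each SSTable is a dict of key -> (timestamp, value). For every key the
    entry with the highest timestamp wins; keys whose winning entry is a
    tombstone are dropped from the result.
    """
    merged = {}
    for table in sstables:
        for key, (timestamp, value) in table.items():
            if key not in merged or timestamp >= merged[key][0]:
                merged[key] = (timestamp, value)
    # Emit the surviving entries in key order, skipping deleted keys.
    return {
        key: merged[key]
        for key in sorted(merged)
        if merged[key][1] is not TOMBSTONE
    }
```

For example, merging an older table that holds keys `a` and `b` with a newer table that tombstones `a` and adds `c` leaves a single table containing only `b` and `c`.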

Get in touch with OnlineITGuru to master Big Data Hadoop through its training.

Advantages of NoSQL Cassandra:

Decentralized:

Every node in the cluster has the same role. There is no single point of failure. Data is distributed across the cluster (so each node contains different data), but there is no master, as every node can service any request.

Scalability:

Read and write throughput both increase linearly as new machines are added, with no downtime or interruption to applications.

Fault-tolerant:

Data is automatically replicated to multiple nodes for fault tolerance. Replication across multiple data centers is supported. Failed nodes can be replaced with no downtime.
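One way to picture how data can be spread over equal nodes and copied to several of them is a hash ring. The sketch below is a simplified model under stated assumptions: it uses an MD5-based token and places replicas on the next nodes clockwise around the ring. The function names `token` and `replicas_for` and the node names are hypothetical, and a production cluster's partitioner and placement strategy are more involved than this.

```python
import bisect
import hashlib


def token(value: str) -> int:
    """Map a string to a position on the ring (MD5 used only for illustration)."""
    return int.from_bytes(hashlib.md5(value.encode()).digest()[:8], "big")


def replicas_for(key: str, nodes, replication_factor: int):
    """Return the nodes that would hold copies of `key` in this toy ring."""
    # Place every node on the ring at the position given by its token.
    ring = sorted((token(node), node) for node in nodes)
    tokens = [t for t, _ in ring]
    # The first replica is the first node at or after the key's token,
    # wrapping around to the start of the ring if necessary.
    start = bisect.bisect_left(tokens, token(key)) % len(ring)
    # Further replicas are the next nodes clockwise.
    count = min(replication_factor, len(ring))
    return [ring[(start + i) % len(ring)][1] for i in range(count)]
```

In this model, if the first replica for a key fails and leaves the ring, the remaining replicas still hold the data and the next node clockwise takes over as an additional replica, which is the intuition behind replacing failed nodes without downtime.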

Tunable consistency:

Writes and reads offer a tunable level of consistency, all the way from "writes never fail" to "block for all replicas to be readable", with the quorum level in the middle.
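The arithmetic behind the quorum level can be sketched in a few lines. A quorum is a majority of the replicas, and a read is guaranteed to overlap the latest acknowledged write when the number of replicas written plus the number of replicas read exceeds the replication factor. The function names below are illustrative, not part of any Cassandra API.

```python
def quorum(replication_factor: int) -> int:
    """Smallest number of replicas that forms a majority."""
    return replication_factor // 2 + 1


def reads_see_latest_write(write_replicas: int, read_replicas: int,
                           replication_factor: int) -> bool:
    """True when every read set must overlap every write set."""
    return write_replicas + read_replicas > replication_factor
```

With a replication factor of 3, a quorum is 2 replicas, so quorum writes combined with quorum reads overlap (2 + 2 > 3), while writing to one replica and reading from one replica does not (1 + 1 is not greater than 3).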

MapReduce support:

Cassandra has Hadoop integration, with MapReduce support. There is also support for Apache Pig and Apache Hive.

Query language:

CQL (Cassandra Query Language) was introduced as a SQL-like alternative to the traditional RPC interface. Language drivers are available for Java (JDBC), Python (DBAPI2) and Node.JS (Helenus).
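To give a feel for how SQL-like CQL is, here are a few statements held as plain Python strings. The keyspace `demo`, the table `users` and its columns are made up for illustration. The block only defines the statements; it does not connect to a cluster, and in practice each string would be handed to whatever driver you use.

```python
# Illustrative CQL statements; keyspace, table and column names are hypothetical.
CQL_STATEMENTS = [
    # A keyspace groups tables and defines how their data is replicated.
    "CREATE KEYSPACE IF NOT EXISTS demo "
    "WITH replication = {'class': 'SimpleStrategy', 'replication_factor': 3}",
    # Tables look much like SQL tables, with a mandatory primary key.
    "CREATE TABLE IF NOT EXISTS demo.users ("
    "user_id uuid PRIMARY KEY, name text, email text)",
    # Inserts and selects use familiar SQL-style syntax.
    "INSERT INTO demo.users (user_id, name, email) "
    "VALUES (uuid(), 'Ada', 'ada@example.com')",
    "SELECT name, email FROM demo.users LIMIT 10",
]

for statement in CQL_STATEMENTS:
    print(statement)
```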

Recommended Audience:

Software developers

ETL developers

Project Managers

Team Leads

Business Analysts

Get in touch with OnlineITGuru to master Big Data through its training.

Prerequisites:

Some knowledge of OOP concepts is a useful prerequisite for learning Big Data Hadoop, but it is not mandatory. The OnlineITGuru trainers will teach you those OOP concepts if you are not familiar with them.